📚 Books I read in January 2026

Two great books contended for the highlight of the month, but eventually I had to pick one. Besides those, a couple of fiction books worth reading completed the list for January.

Here we are with the January edition of the books I read last month!

If you end up reading one of them, please let me know in the comments section.

💫 Book Highlight: The AI Con by Emily M. Bender & Alex Hanna

The AI Con, by Emily M. Bender & Alex Hanna

288 pages, First Published: May 13, 2025

One of the first books I read in 2026 had been on the reading list for a long time in 2025. Finally, I picked it up on January 6th and filled this shameful gap in my education.

The best way to summarise the main point from the book is to take a quote straight from chapter 1, appropriately titled An Introduction to AI Hype:

Artificial Intelligence, if we’re being frank, is a con: a bill of goods you are being sold to line someone’s pockets. A few major well-placed players are poised to accumulate significant wealth by extracting value from other people’s creative work, personal data, or labor, and replacing quality services with artificial facsimiles.

In other words, if the title wasn’t clear enough, this book is a very opinionated piece on what the current industry around AI is: its blatant promises, the concentration of power and wealth, the exploitation of other people’s data and work and of the planet’s resources, and ultimately its role as a vehicle for the increased enshittification[1] of humans’ lives.

The authors are not afraid of taking sides, but they are at least trying to be as rigorous as one can possibly be in going through the sources, papers, and footnotes.

The book’s origins are to be found in a podcast hosted by the same authors, which goes by the telling name Mystery AI Hype Theater 3000[2].

What I like about the book is its holistic take on the industry and supply chain that is being sustained by the hype around AI and its potential. The authors go back to the origins of the term AI in the 1950s to identify patterns that might sound very familiar to those living in current times:

They relied on huge claims with little to no empirical support, bad citation practices, and moving goalposts to justify their projects, which found purchase in Cold War America. These are the same set of practices that we see from today’s AI boosters, although they are now primarily chasing market valuations, in addition to government defense contracts.

The book covers a lot of ground.

From the embarrassing ties between a lot of the fundamental science around measuring intelligence and racist and eugenicist theories, to the parallel with the Luddites pushing back on technology being used to enrich the few while degrading the working conditions of most people.

It touches on how training LLMs relies on a large number of low-paid gig workers in the Majority World, who are developing all kinds of mental health issues as a consequence.

Or how automated decisions tend to amplify bias and injustice.

Also, how LLMs are fundamentally trained on stolen material and are essentially large-scale plagiarism machines.

Or how AI is being used to undermine the fundamentals of education behind a shiny patina of innovation.

I’ve covered the topic of copyright theft in a recent article, which was partly informed by what I was reading in The AI Con at that time.

In that article, I mentioned a quote from Marc Andreessen on the potential impact of requiring the AI industry to follow existing copyright laws.

It was only later that I remembered that I originally found it in this book.

Here’s how Bender and Hanna report it:

For AI boosters, the threat of these lawsuits is existential. And, frankly, we welcome that. Venture capital firm Andreessen Horowitz warned that all of their investments in AI would be worth a lot less if they had to abide by copyright law: “Imposing the cost of actual or potential copyright liability on the creators of AI models will either kill or significantly hamper their development.”[3]

I found the parts dedicated to explaining how AI-doomerists, those who believe a superintelligence will lead to human extinction, are just a different manifestation of AI-boosterists, a bit less interesting. Mainly because it added little to what I knew already about the topic, but it might be helpful for others less familiar with it.

Still, the main thesis of this part is one I fully subscribe to.

We should stop being distracted by speculative long-term risks of AI getting out of control, and focus on the real issues and harm it’s causing in the world, here and now.

In line with this, the book is not all doom and gloom.

The last chapter focuses on actions people can take to resist and to influence the regulation of technology, in order to really benefit humanity at large.

Very much in line with some of the discussions and actions I’m looking forward to addressing in the Luddites in Tech group, announced a few weeks back.

You’ll find more references to it and a link later in the article.

📚 Other Books I Read in January

On Tyranny by Timothy Snyder

On Tyranny, by Timothy Snyder

127 pages, First Published: February 28, 2017

Another book that has been on my list for quite some time, and I’m glad I finally picked it up.

It’s a bit ironic that I finished it on January 6th, the anniversary of one of the most shameless and blatant attacks on democracy in our recent history[4].

I must admit that On Tyranny was a strong contender for the highlight of the month slot. Ultimately, I went for The AI Con because I’m assuming it’s the more relevant choice for my readership. Feel free to prove me wrong via your messages or comments.

The basic idea behind Snyder’s book is the following: we can draw plenty of lessons, twenty to be precise, from the darkest moment of twentieth-century history, namely the rise of various forms of totalitarian regimes. Those lessons are very relevant in today’s world, and could serve as a powerful way to resist the forces that are threatening democracy in the twenty-first century.

It’s a short book that reads quickly, but triggers a lot of thoughts and reflections. It was written as a reaction to the first Trump presidency, but it’s every bit as relevant, if not more, now that we’re in the second one.

Most people might be familiar with the first lesson it contains, Do not obey in advance, as it’s often quoted to invite people to come out of passive acceptance and engage in active resistance.

The anticipatory obedience of Austrians in March 1938 taught the high Nazi leadership what was possible.

Among the twenty lessons, the three that follow are some of my favorites.

Not because I find them easier or I like them more, but because they’re relevant to my daily life. Ultimately, all twenty lessons are insightful and relevant, but consider this as my personal priority list.

#2 Defend institutions

It is institutions that help us to preserve decency. They need our help as well. Do not speak of “our institutions” unless you make them yours by acting on their behalf. […] So choose an institution you care about—a court, a newspaper, a law, a labor union—and take its side.[5]

How often have we heard that institutions are obstacles, inefficient, or outright enemies of progress and prosperity? Radical techno-optimists, vulture capitalists, and power-hungry tyrants from all around the world are beating the same drum.

When institutions are presented as obstacles, we should always ask ourselves these questions: To whom? Why were they established to begin with? Who will benefit the most from their dismantling?

The following quote resonated loudly:

The mistake is to assume that rulers who came to power through institutions cannot change or destroy those very institutions—even when that is exactly what they have announced that they will do.

The book was written in 2017, but I couldn’t have thought of a better prediction for what DOGE would set out to achieve seven years later.

#5 Remember professional ethics

When political leaders set a negative example, professional commitments to just practice become more important.

This one hit very close to home.

I’ve been reflecting a lot in the past months on where the tech industry is going, its ties to the political establishment that is hitting the foundations of democracy, and how to deal with it.

First, in an article that focused on the perceived inevitable evolution of the leadership role in technology.

And then in a more general article about putting principles ahead of profits, especially when those profits stink of collaborationism.[6]

In that same article, I shared an invite to join me in establishing a Luddites in Tech community. While reading On Tyranny, I found a very compelling explanation of why I felt such a strong drive:

Professions can create forms of ethical conversation that are impossible between a lonely individual and a distant government. […] Professional ethics must guide us precisely when we are told that the situation is exceptional.

We’re living through exceptional times, therefore we should up our game on professional ethics.

The Luddites in Tech community is coming together.

You are free to join it whenever you want to contribute to the conversation. For now, it’s a simple space on the matrix.org network[7]. You can join us by following this link.

#15 Contribute to good causes

Be active in organizations, political or not, that express your own view of life. Pick a charity or two and set up autopay. Then you will have made a free choice that supports civil society and helps others to do good.

Don’t be put off by people telling you this is just virtue-signaling[8].

It’s still a good thing to do.

There are many ways in which we can contribute to good causes, including:

Donating to charities

Contributing to Open Source Projects

Actively contributing to humanitarian causes

Selecting service providers that directly or indirectly support good causes, or at least are not directly harming them

The following quote from the book is another one that captured my attention.

[…] one element of freedom is the choice of associates, and one defense of freedom is the activity of groups to sustain their members.

In other words, participating in good causes is also a way of associating with others.

In a time when we’re increasingly pushed to develop anti-social relationships with AI-friends, AI-partners, or AI-coaches, engaging with real humans is in and of itself an act of resistance.

Cryptonomicon by Neal Stephenson

Cryptonomicon, by Neal Stephenson

1152 pages, First Published: May 1, 1999

I’ve already spent a lot of words on the first two books. I’ll try to be brief on the remaining two.

Cryptonomicon is another masterpiece from Stephenson. It’s a book that is arguably written by a nerd, for nerds. The nerds of the late 90s, early 2000s, to be more precise.

It combines events that took place in WWII with the story of early developments of the Internet and the World Wide Web. From WWII to WWW, you might want to say.

It’s full of references to the hacker culture from those days, and it even includes a bespoke cryptographic algorithm specifically developed for the book by Bruce Schneier[9].

In pure Stephenson style, characters in this book have family ties with those described in the Baroque Cycle trilogy, which takes place some three centuries before the events described in Cryptonomicon.

If you don't know what the Baroque Cycle is, check it out below.

Cryptonomicon is a long book, but it is worth every page.

Unless you can’t stand good stories, complex characters, a good dose of humor, and nerdy references.

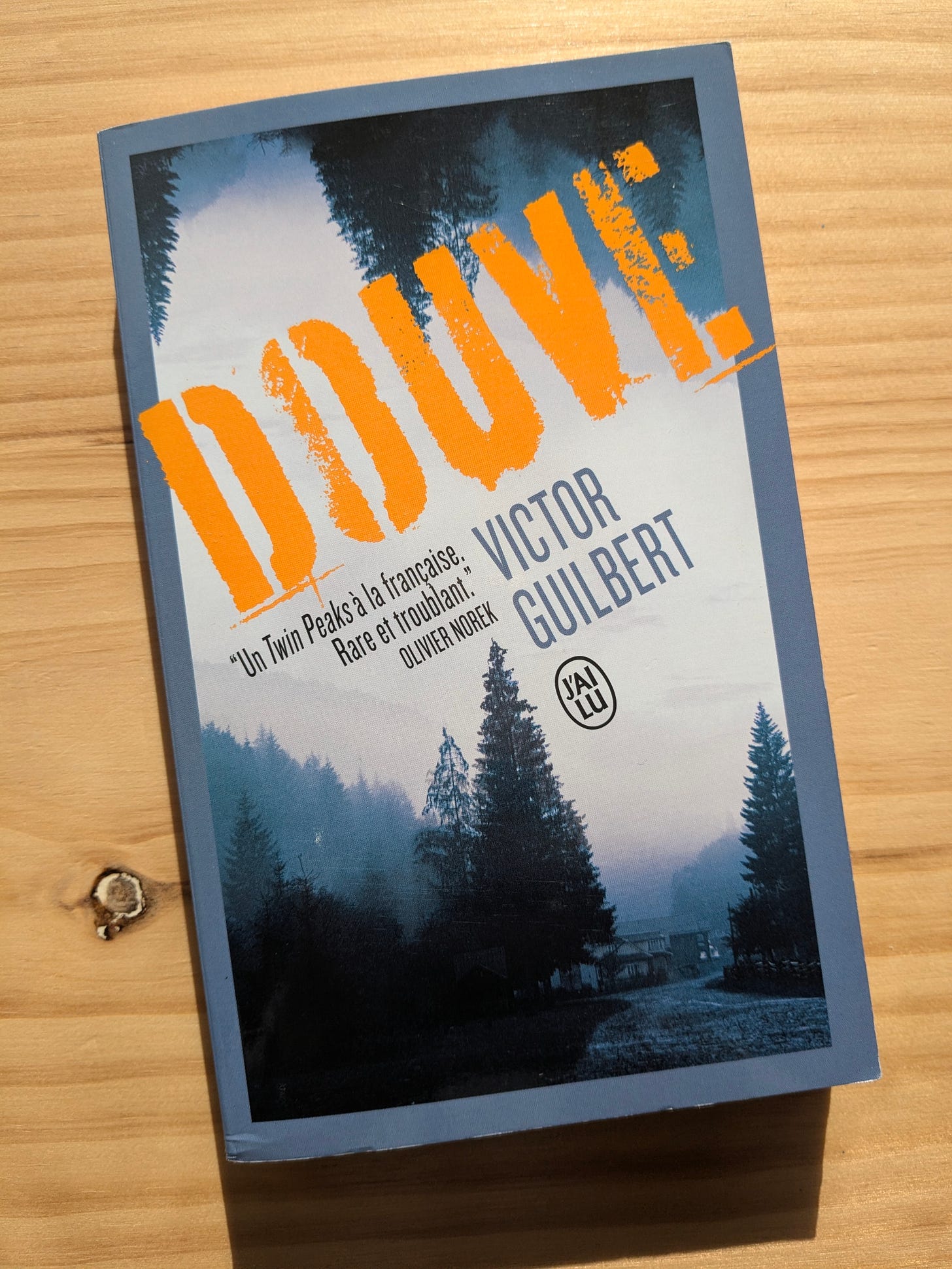

Douve by Victor Guilbert

Douve, by Victor Guilbert

298 pages, First Published: January 7, 2021

Finally, this is one of the last books I picked up during our summer trip to France. It was a free copy I got for buying a bunch of other books. It’s the first thriller novel from Guilbert, an author I didn’t know until recently.

What made me choose it among the available free options is the fact that it has a blurb from Olivier Norek on its cover.

I was not disappointed and fully enjoyed the reading experience. Apparently, this book turned into a series of three volumes, all covering the investigations of the main character, Hugo Boloren.

I guess I’ll need to find the other two soon.

WIT Promo for Q1 2026

I’ve recently decided to resume offering quarterly promos for people who want to benefit from my services.

I’m happy to announce that I’ve opened up the Q1 promo that will run until the end of March 2026.

I’m making it easier for Women in Tech to level up their engineering leadership skills by offering an exclusive discount on Sudo Make Me a CTO: 30% off for the first 12 months.

You can find out all the details at the official promo page.

Feel free to share this opportunity with people you know, and do not hesitate to reach out if you’d like to learn more about it.

You can always schedule a free 30-minute session to get all your questions addressed.

Looking forward to seeing the community grow with more diversity.

[1] Fun fact: I hesitated for a moment on whether I should read Cory Doctorow’s book Enshittification or pick up The AI Con first. I hope Doctorow will not hold it against me that I went the other way.

[2] You can find it here. I don’t always appreciate the dismissive tone used occasionally by the hosts and authors, but I found it a great companion to the book.

[3] The book credits this article as the original source for the quote.

[5] Before you scream “em-dashes, this is AI slop”: the em-dash predates the advent of LLM-generated crap, thank god, and was used in the printed version of the book.