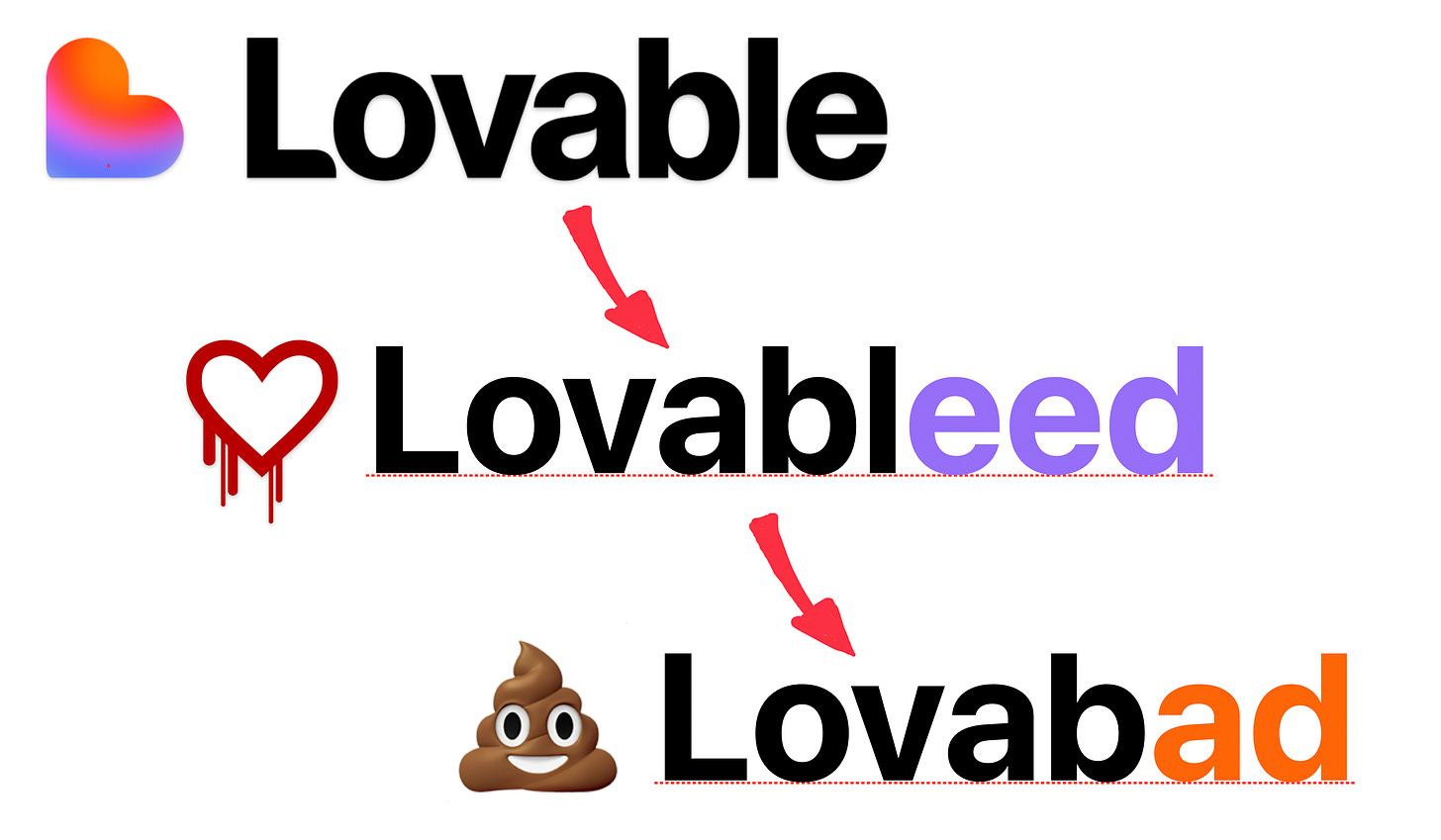

Lovable, Lovableed, Lovabad

How a potentially easy-to-handle security issue turned into a complete PR disaster for the hyped European AI startup Lovable.

Some of you might have heard of the latest security incident that affected Lovable, the cool and hyped $6.6 billion startup allowing anyone to create websites and apps by chatting with AI[1].

Now, security issues can happen to anyone, no matter whether you’re a bootstrapped garage startup, an established corporation, or a hyped AI startup flush with VC cash. We should never, ever cheer, rejoice, or otherwise celebrate such incidents.

First of all, because they’re not necessarily a consequence of misconduct on the company's side. Cybersecurity is hard, and anyone can make mistakes in this space[2].

Secondly, and most importantly, because the main victims are always the users whose data, credentials or sometimes money have been compromised.

In other words, such incidents should be taken very seriously, which includes two things:

Treating security researchers with respect[3].

Treating users with respect, which means taking care of their data with a high degree of urgency, and being transparent in communications with them.

That’s why I’m interested in the Lovable case.

Not because the security incident took Lovable all the way to Lovableed[4].

But because the way they handled the issue took them from Lovableed straight to Lovabad.

And for those of you who need context, this is what happened.

A chronology of the events

I’ve consulted a few sources to get a sense of the sequence of events. The ones I recommend, if you want to get into the details, are the following:

This article from The Register

This article from Cybernews

This article from Cyberpress

This is a short summary of what happened:

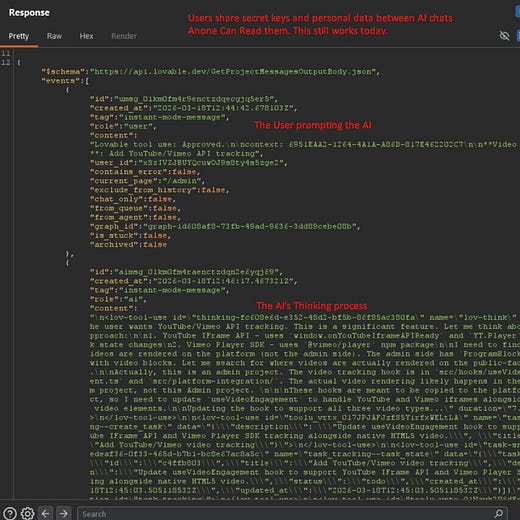

Until May 2025, free-tier users could only create public projects. What that meant was that both code and chat history for those projects would be publicly accessible to any other user.

In May 2025, Lovable allowed free-tier users to create private projects, while at the same time removing the option for enterprise customers to create any public project. Public remained the default for everyone else.

Projects remained public by default until December 2025. At the same time, Lovable supposedly patched their systems so that chats for public projects wouldn’t be accessible to external users.

In February 2026, a change in Lovable’s backend code made the chats of public projects accessible again.

This regression was first reported on March 3rd, and Lovable patched the issue for new projects. But they did not patch it for existing projects.

On April 20th, a security researcher who goes by the handle @weezerOSINT reported the issue on Twitter after seeing their report closed as a duplicate.
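To make the visibility rules in this timeline concrete, here is a minimal Python sketch of what such checks could look like. Everything in it is hypothetical: the `Project` type and the `can_view_code` and `can_view_chat` functions are names made up for illustration, and none of it reflects Lovable's actual code. The only point is that code visibility and chat visibility are two separate decisions, and that the chat rule should not depend on when a project was created.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Project:
    # Hypothetical, simplified model of a project (an assumption for this sketch).
    owner_id: str
    is_public: bool


def can_view_code(project: Project, viewer_id: Optional[str]) -> bool:
    # Assumed rule: the code of a public project is visible to anyone,
    # the code of a private project only to its owner.
    return project.is_public or viewer_id == project.owner_id


def can_view_chat(project: Project, viewer_id: Optional[str]) -> bool:
    # Assumed rule: chat history is owner-only, whatever the project visibility.
    # The check ignores creation time, so it applies to existing projects
    # exactly as it does to new ones.
    return viewer_id is not None and viewer_id == project.owner_id


if __name__ == "__main__":
    public_project = Project(owner_id="alice", is_public=True)
    print(can_view_code(public_project, "bob"))
    print(can_view_chat(public_project, "bob"))
```

Under these assumed rules, an outsider can read the code of a public project but not its chat, and a handful of assertions like the ones implied above would be enough to catch a backend change that quietly reopens chat access.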

What happened next is a perfect example of how not to handle a security incident as a tech company.

From Lovableed to Lovabad

Lovable's first reaction?

Deny the claims!

The public statement is quite clear:

> To be clear: We did not suffer a data breach.

And then they go on with a fuzzy and only mildly apologetic explanation about how the issue was… the documentation!

The real problem with that: it wasn’t true. The vulnerability and the leak were real, and Lovable seems to have dismissed the report without even taking the time to check whether it was substantiated.

One might wonder if over-exposure to AI slop made Lovable's employees treat anything they read online as a hallucination. That would at least explain something.

A few hours later, however, someone seemed to realise they had completely messed up their reading of the situation, and Lovable published a clarifying statement.

Which, I can only guess, was supposed to serve as an apology and clarification.

Once you're done laughing, please read on, as there are two main things I would like to point out from the embarrassing statement.

> When you create a project on GitHub, you can make it private or public. Lovable worked the same. Users had a “Public” or “Private” option right in the chatbox. A public project meant the entire project was public, both chat and code. “Just like a public project on GitHub,” we thought.

That’s naive and borderline criminal.

Treating the chat at the same level as the code means these folks either have no idea how users use chatbots, or no idea how many crazy sensitive and personal details get shared in there. Either that, or they’re just acting in bad faith. Yes, this is a perfect example of Hanlon’s razor, and I tend to believe its default conclusion: incompetence.

I would only accept that as an excuse if they had clearly stated, “We have no idea what we're doing, and you shouldn't trust us with your data.”

But that wasn't the case here, so excuse not accepted.

But the incompetence doesn't seem to stop here, as the statement continues with:

> This was reported through our vulnerability disclosure program (via HackerOne). Unfortunately, the reports were closed without escalation because our HackerOne partners thought that seeing public projects’ chats was the intended behaviour.

So this is HackerOne’s fault… except that it wasn’t them who published a public statement denying the claims from the researcher.

Even if it was genuinely HackerOne’s fault, which I seriously question, I would have expected two things from Lovable.

That they would have taken the blame, as this is about their users, rather than pointing the finger at a vendor.

That they would have thoroughly checked the nature of the researcher’s report before publicly denying it.

They did neither. Instead, the best they were able to come up with to take accountability for the embarrassing display of incompetence and arrogance is the following:

> We understand that pointing to documentation issues alone was not enough here. We’ll do better.

We’ll do better?

Seriously?

We don’t accept such hollow promises from our five-year-old kids, as we know they’re meaningless without a clear plan and detailed list of what exactly they’ll be doing differently next time.

It would be so easy to do better than that.

It would have been enough to say something along these lines:

> Dear users and customers, we screwed up.
>
> Not only did we demonstrate a profound lack of understanding of security and privacy in our initial design of the public projects.
>
> Not only did we reintroduce the issue after fixing it, most likely because someone accepted a vibe-coded patch without understanding its full implications.
>
> We even denied the claims from a well-meaning researcher without taking the time to review them as thoroughly as they deserved.
>
> All the hype, attention and celebrity went to our heads, and we lost sight of humility. Hubris led us to not take security seriously, and to assume we’d always be right.
>
> This realization has prompted a serious discussion internally, and as a consequence we’re going to review our entire security posture, from internal controls to how we deal with the cybersecurity community at large.
>
> We apologise to our users, and commit to sharing transparent and detailed updates on our way to improve security at least once per quarter.

There you have it.

It just needs to be genuine, honest and credible[5].

Your customers will respect you a lot more if you take accountability.

They will trust you with their data more if you’re transparent about your shortcomings.

They might even stay with you instead of leaving for one of the gazillions of existing competitors if you transparently share your detailed plans for making real and concrete improvements.

In a time of excessive focus on “Intelligence”, a little bit of self-reflection[6] and care for your users, with both prioritised above your ego, can go a long way in making you stand out from the pack.

Especially when your brand relates to emotions.

Will Lovabad turn into Lovaback?

The ball is in their court.

[1] Verbatim quote from their landing page.

[2] That said, I can hear you loud and clear if you’re already wondering how a company valued at such an insane amount and poised to be at the forefront of technology could fall victim to such an apparently trivial oversight. Just bear with me for a second.

[3] I mean real, serious, competent researchers. Not the rookies spamming companies with slop reports looking for quick gains.

[4] I might be the first one using this word. I couldn’t find any prior references to it online. You’re free to use it however you want, but if you can, please share this article too. The name is obviously inspired by the (in)famous Heartbleed vulnerability, which had massive consequences for the industry.

[5] Which means the last thing you want to do is to ask AI to generate it for you.

[6] Don't be afraid of making Marc Andreessen upset with your introspection; he'll still give you plenty of money if he thinks he can make a lot more in return.